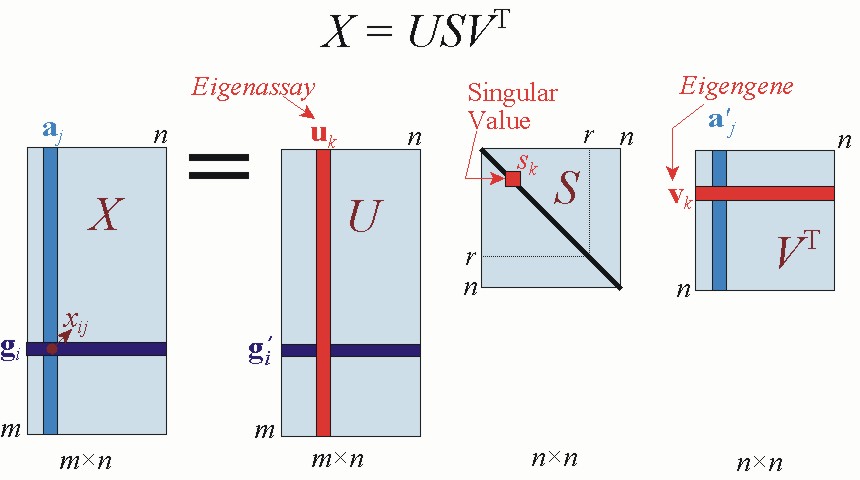

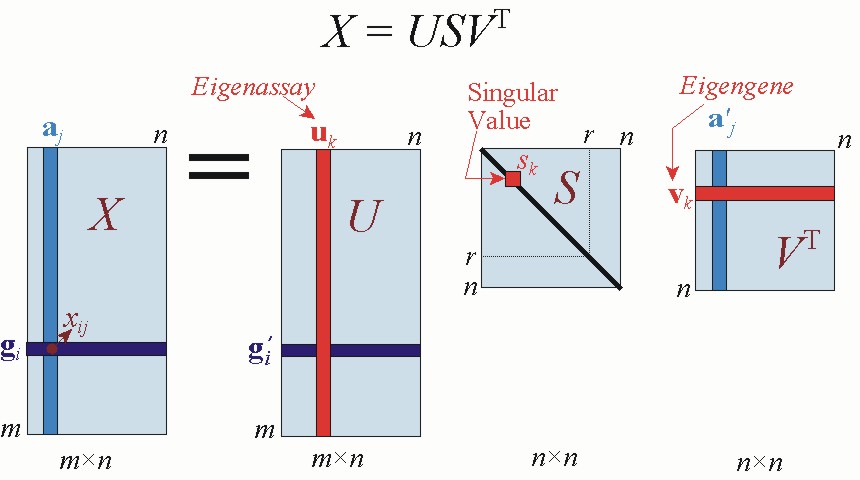

I'll use the $A = USV^\intercal$ notation for SVD (notice that $U$ is actually a different matrix from $V$, unlike the $V$ and $V^\intercal$ in your notation). SVD performs a decomposition based on the spatial structure of a matrix (image), whereas spectral filters look at its frequency components. Image filtering (in the frequency domain) is performed by decomposing an image into its frequency components and removing part of the spectrum. In this sense both SVD and image filtering decompose an image through a change of basis. The similarity between the two techniques stops there, as they operate over different domains.

To illustrate the point, let's see how both approaches apply to a very simple image, one with no variability along the horizontal axis. $U$ operates in the column space of $A$ and $V$ in its row space. For this image we have a perfect decomposition at rank $k = 1$, which means that we only need to keep the first column of $U$ (scaled by the first singular value) to recover the image; $V$ doesn't contain any information, as the image has no variability along the horizontal axis.

If we now look at the transpose of that image, $A^\intercal$, the roles of $U$ and $V$ swap, following the rule for transposing a product ($A^\intercal = VS^\intercal U^\intercal$), and we have the opposite case: in the SVD of the transposed image we only need to keep the first column of its $V$ factor to recover the image (its $U$ factor doesn't contain any information, as the transposed image has no variability along the vertical axis).

The "equivalent" operation to lowering the rank in an SVD would be to apply a low-pass filter in the frequency domain. For the same image, the result would be a low-frequency version that looks like a "blurry" version of the original.

To sum up, the relationship between SVD and the spectral decomposition of images is loose at best. SVD provides a linear decomposition of the vertical and horizontal features of an image, in which each of the components (ranks) can contain both high and low frequencies. The more vertical and horizontal variability there is in the image, the more components you'll need in order to reproduce it. In terms of data compression, both domains offer competitive approaches depending on the case; neither is better than the other in general.
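The rank-1 argument above can be checked numerically. The following is a minimal sketch, assuming NumPy and a made-up striped test image (the array `column_profile` and the helper `rank_k_approximation` are illustrative names, not taken from the original post):

```python
import numpy as np

# Hypothetical test image: 8 rows, 6 columns, every row constant
# (horizontal stripes), so nothing varies along the horizontal axis.
column_profile = np.array([0.0, 1.0, 0.0, 1.0, 0.5, 0.5, 1.0, 0.0])
A = np.outer(column_profile, np.ones(6))


def rank_k_approximation(image, k):
    """Rebuild `image` from its k largest singular triplets only."""
    U, s, Vt = np.linalg.svd(image, full_matrices=False)
    return U[:, :k] @ np.diag(s[:k]) @ Vt[:k, :]


U, s, Vt = np.linalg.svd(A, full_matrices=False)

# Only one singular value is numerically non-zero, so rank 1 is exact.
numerical_rank = int(np.sum(s > 1e-10 * s[0]))
rank1_error = np.max(np.abs(A - rank_k_approximation(A, 1)))

# The first right singular vector is constant: V carries no spatial
# information for this image, all of it sits in the first column of U.
v1 = Vt[0]
v1_is_constant = np.allclose(v1, v1[0])

# For the transposed image the roles swap: now the first LEFT singular
# vector is the constant one.
U_t, s_t, Vt_t = np.linalg.svd(A.T, full_matrices=False)
u1_of_transpose_is_constant = np.allclose(U_t[:, 0], U_t[0, 0])

print(numerical_rank, rank1_error, v1_is_constant, u1_of_transpose_is_constant)
```

Any image built as an outer product of one column profile and one row profile behaves the same way, whatever frequencies the profiles contain, which is why rank alone says little about frequency content.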

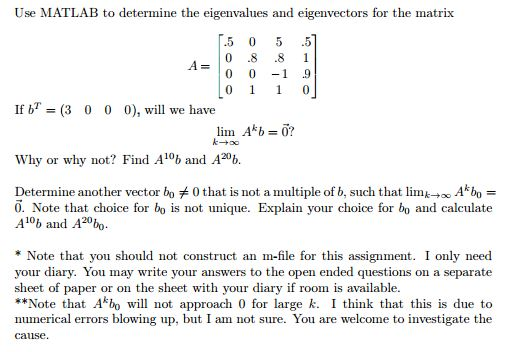
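The low-pass filtering described in the text can be sketched in the same spirit. This is only an illustration under stated assumptions: NumPy's FFT, an ideal (hard cutoff) filter, and a hypothetical striped test image; the function name `ideal_low_pass` and the cutoff value are choices made for this example.

```python
import numpy as np


def ideal_low_pass(image, cutoff):
    """Zero every 2-D frequency whose radius (cycles/sample) exceeds `cutoff`."""
    rows, cols = image.shape
    fy = np.fft.fftfreq(rows)[:, None]   # vertical frequencies
    fx = np.fft.fftfreq(cols)[None, :]   # horizontal frequencies
    mask = np.sqrt(fx**2 + fy**2) <= cutoff
    spectrum = np.fft.fft2(image)
    return np.real(np.fft.ifft2(spectrum * mask))


# Hypothetical test image: sharp horizontal stripes, 4 pixels off, 4 pixels on.
stripes = np.tile(np.repeat([0.0, 1.0], 4), 4)   # length 32, period 8
image = np.outer(stripes, np.ones(32))

blurred = ideal_low_pass(image, cutoff=0.15)

# The DC term survives the filter, so the mean brightness is unchanged.
mean_preserved = np.isclose(blurred.mean(), image.mean())

# The sharp edges are smeared: the largest jump between neighbouring rows shrinks.
max_step_original = np.max(np.abs(np.diff(image, axis=0)))
max_step_blurred = np.max(np.abs(np.diff(blurred, axis=0)))

# The rank does not change: the blurred image is still an outer product.
rank_original = np.linalg.matrix_rank(image, tol=1e-8)
rank_blurred = np.linalg.matrix_rank(blurred, tol=1e-8)

print(mean_preserved, max_step_original, max_step_blurred, rank_original, rank_blurred)
```

For this particular image the filter blurs the stripes without lowering the rank, while a rank-1 SVD truncation would keep the edges perfectly sharp, so the two operations are not interchangeable.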

I have been studying the SVD algorithm recently, and I can understand how it might be used for compression, but I am trying to figure out whether there is a perspective from which SVD can be seen as a low-pass filter.

Before we discuss SVD, I want to check that my understanding of eigenvalue decomposition is correct. The eigenvalue decomposition of a square (symmetric) matrix can be written as:

$$A = VDV^\intercal$$

where $V$ is the matrix whose columns are the eigenvectors of $A$ and $D$ is the diagonal matrix whose diagonal entries are the corresponding eigenvalues. So I can perform compression using eigenvalue decomposition by setting the eigenvalues under some threshold to 0. In this case, the smaller eigenvalues have a relatively strong shrinking effect on the rows of $V^\intercal$ and contribute less overall.

The main question that I have, and it relates to eigenvalue decomposition as well as SVD, is whether there is some relationship to the frequency content of the signal. Is the compression algorithm acting like a low-pass filter that, depending on the threshold set, stops the high-frequency components from passing through, and so acts as a smoothing operator, or is there no relationship? Do the smaller eigenvalues contribute to the high-frequency components of the signal?

The SVD factorization of a matrix $A$ generates a set of eigenvectors for both $AA^\intercal$ (the columns of $U$) and $A^\intercal A$ (the columns of $V$). In MATLAB, `svds(A)` computes the five largest singular values and associated singular vectors of the matrix `A`. `svds(A,k)` computes the `k` largest singular values and associated singular vectors of the matrix `A`. `svds(A,k,0)` computes the `k` smallest singular values and associated singular vectors. With one output argument, `s` is a vector of singular values. With three output arguments, and if `A` is m-by-n, `U*S*V'` is the closest rank-`k` approximation to `A`.

Algorithm: `svds(A,k)` uses `eigs` to find the `k` largest-magnitude eigenvalues and corresponding eigenvectors of an auxiliary matrix `B`. `svds(A,k,0)` uses `eigs` to find the `2k` smallest-magnitude eigenvalues and corresponding eigenvectors of `B`, and then selects the `k` positive eigenvalues and their eigenvectors.

Example: `west0479` is a real 479-by-479 sparse matrix. `svds` picks out the largest and smallest singular values. These plots show some of the singular values of `west0479` as computed by `svd` and `svds`.
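Two of the claims in the `svds` notes above can be checked with a small NumPy sketch. It assumes that the auxiliary matrix `B` is the symmetric block matrix `[0 A; A' 0]`, a standard construction whose eigenvalues are plus and minus the singular values of `A`; that definition, the random test matrix and the sizes `m`, `n`, `k` are assumptions made for this illustration. NumPy's dense routines stand in for MATLAB's `eigs` and `svds`.

```python
import numpy as np

rng = np.random.default_rng(0)
m, n, k = 6, 4, 2
A = rng.standard_normal((m, n))

# Assumed construction of B: symmetric (m+n)-by-(m+n) block matrix [0 A; A' 0].
B = np.block([[np.zeros((m, m)), A],
              [A.T, np.zeros((n, n))]])

singular_values = np.linalg.svd(A, compute_uv=False)   # sorted descending
eigenvalues = np.linalg.eigvalsh(B)                     # sorted ascending

# The n largest eigenvalues of B are the singular values of A ...
largest_eigs = eigenvalues[::-1][:n]
# ... and the n smallest are their negatives, which is why only the
# positive eigenvalues need to be selected.
smallest_eigs = eigenvalues[:n]

# Rank-k truncation: the spectral-norm error equals the first discarded
# singular value, the smallest error any rank-k matrix can achieve.
U, s, Vt = np.linalg.svd(A, full_matrices=False)
A_k = U[:, :k] @ np.diag(s[:k]) @ Vt[:k, :]
error_2norm = np.linalg.norm(A - A_k, 2)

print(largest_eigs, singular_values, error_2norm, s[k])
```

Working with `B` lets a symmetric eigenvalue solver do the job of an SVD routine, at the cost of a matrix of size m+n.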
